You can now make your submissions to the test set of the SemEval 2021 task 6 shared task and get immediate feedback!

Memes are one of the most popular type of content used in an online disinformation campaign.

They are mostly effective on social media platforms, since there they can easily reach a large number of users.

Memes in a disinformation campaign achieve their goal of influencing the users through a number of rhetorical and psychological techniques, such as causal oversimplification, name calling, smear.

The goal of the shared task is to build models for identifying such techniques in the textual content of a meme only (two subtasks) and in a multimodal setting in which both the textual and the visual content are to be analysed together (one subtask).

Task Description

Background

We refer to propaganda whenever information is purposefully shaped to foster a predetermined agenda.

Propaganda uses psychological and rhetorical techniques to reach its purpose.

Such techniques include the use of logical fallacies and appealing to the emotions of the audience.

Logical fallacies are usually hard to spot since the argumentation, at first sight,

might seem correct and objective.

However, a careful analysis shows that the conclusion cannot be drawn from

the premise without the misuse of logical rules.

Another set of techniques makes use of emotional language to induce the

audience to agree with the speaker only on the basis of the emotional bond

that is being created, provoking the suspension of any rational analysis of the argumentation.

Memes consist of an image superimposed with text.

The role of the image in a deceptive meme is either to reinforce/complement a technique in the text or to convey itself one or more persuasion techniques.

Technical Description

We defined the following subtasks:

- Subtask 1 - Given only the “textual content” of a meme, identify which of the 20 techniques are used in it. This is a multilabel classification problem.

- Subtask 2 - Given only the “textual content” of a meme, identify which of the 20 techniques are used in it together with the span(s) of text covered by each technique. This is a multilabel sequence tagging task. The task is the combination of the two subtasks of the SemEval 2020 task 11 on "detecting propaganda techniques in news articles". Note that subtask 1 is a simplified version of subtask 2 in which the spans covered by each technique is not supposed to be provided.

- Subtask 3 - Given a meme, identify which of the 22 techniques are used both in the textual and visual content of the meme (multimodal task). This is a multilabel classification problem.

Note that subtask 1 is a simplified version of subtask 2 in which the spans covered by each technique is not supposed to be provided.

Moreover, the task is the combination of the two subtasks of the SemEval 2020 task 11 on "detecting propaganda techniques in news articles".

Data Description

The corpus is hosted on the shared task github page. Beware that the content of some memes might be considered offensive or too strong by some viewers.

We are releasing the data in batches.

Subscribe to the task mailing list and the Twitter accounts (see the bottom of the page) to get

notified when a new batch is available.

Note that, for subtask 1 and subtask 2, you are free to use the annotations of the PTC corpus (more than 20,000 sentences). The domain of that corpus is news articles, but the annotations are made using the same guidelines, altough fewer techniques were considered.

Note that, for subtask 1 and subtask 2, you are free to use the annotations of the PTC corpus (more than 20,000 sentences). The domain of that corpus is news articles, but the annotations are made using the same guidelines, altough fewer techniques were considered.

We provide a training set to build your systems locally.

We will provide a development set and a public leaderboard to share your results in real time with the other participants involved in the task.

We will further provide a test set (without annotations) and an online submission website

to score your systems.

Input and Submission File Format

The input data for task 1 and 2 is the text extracted from the meme.

The training, the development and the test sets for task 1 and 2 are distributed as json files, 1 single file per task.

The input data for task 3, in addition to the text extracted from the meme, is the image of the meme itself. The images are distributed together with the task 3 json in a zip file.

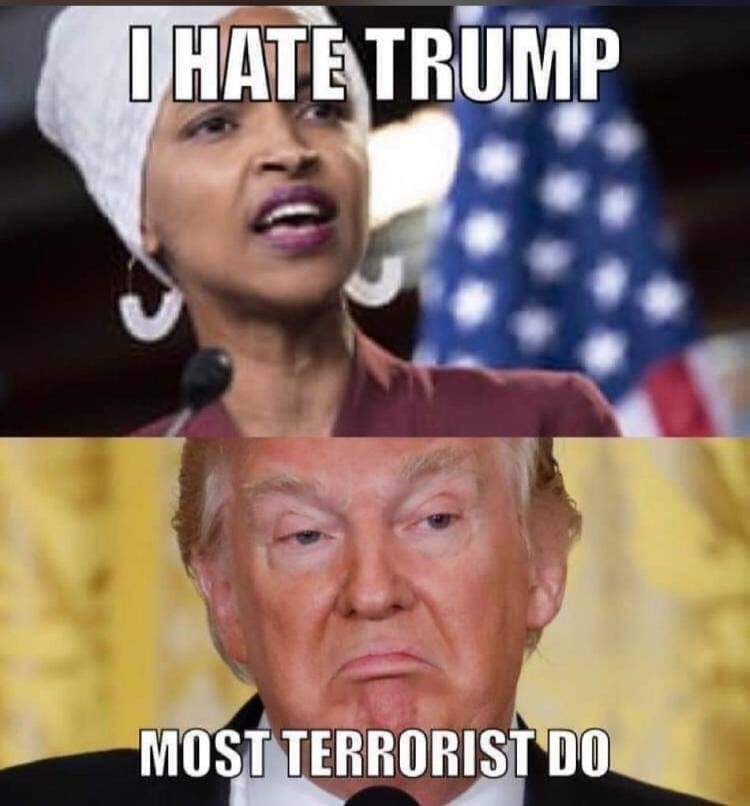

Here is an example of a meme:

Task 1

The entry for that example in the json file for Task 1 is

{

"id": "125",

"text": "I HATE TRUMP\n\nMOST TERRORIST DO",

"labels": [

"Loaded Language",

"Name calling/Labeling"

]

},

where

- id is the unique identifier of the example across all three tasks

- text is the textual content of the meme, as a single UTF-8 string. While the text is first extracted automatically from the meme, we manually post-process it to remove errors and to format it in such a way that each sentence is on a single row and blocks of text in different areas of the image are separated by a blank row. Note that task 1 is an NLP task since the image is not provided as an input.

- labels is a list of techniques (the list of valid technique names is available at the github repository of the project) used in text. Since these are the gold labels, they will be provided for the training set only. In this case two techniques were spotted: Loaded Language and Name calling/Labeling.

Task 2

The entry for that example in the json file for Task 2 is

{

"id": "125",

"text": "I HATE TRUMP\n\nMOST TERRORIST DO",

"labels": [

{

"technique": "Loaded Language",

"start": 2,

"end": 6,

"text_fragment": "HATE"

},

{

"technique": "Name calling/Labeling",

"start": 19,

"end": 28,

"text_fragment": "TERRORIST"

}

]

},

where the two fields id and text are identical to those for task 1.

Since task 2 requires, in addition to identifying the techniques, to also mark the text spans in which they occur, an entry of the field labels has now the following attributes:

- technique is the name of the technique. The list of valid technique names is available at the github repository of the task.

- start is the index of the starting character in text of the span related to technique. The character with index start is included in the resulting fragment. The index of the first character of text is 0. For example the span covered by Loaded language is the word HATE which starts at the character with index 2 in the text string "I HATE TRUMP\n\nMOST TERRORIST DO".

- end is the index of the ending character in text of the span related to technique. The character with index end is not included in the resulting fragment. For example the span covered by Loaded language is the word HATE which ends at the character with index 5 included in the text string "I HATE TRUMP\n\nMOST TERRORIST DO".

- text_fragment is the actual fragment covered by the instance of the technique. More specifically, text_fragment is the substring of text starting at the character of index start included and ending at the character of index end excluded. The value text_fragment can be used to verify that the substring indexing mechanism used for the experiments is correct.

A submission for task 2 is a single json file with the same format as the input file, but where only the fields id, labels (including the fields start, end, technique), for each example, are required.

Task 3

The input for task 3 is a json and a folder with the images of the memes.

The entry in the json file for the meme above is

{

"id": "125",

"text": "I HATE TRUMP\n\nMOST TERRORIST DO",

"labels": [

"Reductio ad hitlerum",

"Smears",

"Loaded Language",

"Name calling/Labeling"

],

"image": "125_image.png"

},

where image is the name of the file with the image of the meme in the folder.

The meaning of id, text and labels is the same as for task 1 (however the list of technique names is different).

Note that the field labels will be provided for the training set only, since it corresponds to the gold labels.

Notice, however, that now we are able to see the image of the meme, hence we might be able

to spot more techniques. In this example smears and Reductio ad hitlerum become

evident only after we are able to understand who the two sentences are attributed to.

There are other cases in which a technique is conveyed by the image only (see example with id 189 in the training set).

A submission for task 3 consists in a single json file with the same format as the input file, but where only the fields id, labels, for each example, are required.

Evaluation

The scorers for the three tasks can be downloaded from the github repository of the task. Here is a brief description of the evaluation measures the scorers compute.

Task 1 and Task 3

Task 1 and Task 3 are multi-label classification tasks.

The official evaluation measure for both tasks is micro-F1. We also report macro F1.

Task 2

Task 2 is a multi-label multi-class sequence tagging task.

We modify the standard micro-averaged F1, Precision and Recall, to account for partial matching between the spans.

The official evaluation measure for both tasks is micro-F1.

In addition, a micro-F1 value is computed for each propaganda technique.

How to Participate

- Register to submit your predictions on the leaderboards (follow the link on top).

-

You will get an email with your team passcode. In case you do not receive the email, after

checking your SPAM folder,

send us an email.

We recommed you write down the passocode (and bookmark your team page) so that you do not have to wait for the email to start working on the tasks.

We will use your email only to send you updates on the corpus or to let you know if we organise any event on the topic, we promise. - Use the passcode on the top-right box to enter your team page. There you can submit your runs and check your progress.

-

Submit your predictions on the test set to check your performance evolution.

Avoid submitting an abnormal number of submissions with the purpose of guessing the gold labels.

Manual predictions are forbidden; the whole process should be automatic.

The data may only be used for academic purposes.

Dates

The official SemEval 2021 timeline is published here.| Training data ready | |

| January 23rd | Test evaluation starts |

| January 30th | Registration closes |

| January 31st | Test evaluation ends |

| February 23rd | Paper submission deadline |

| March 29th | Notification to authors |

| April 5th | Camera ready papers due |

| Summer 2021 | SemEval 2021 workshop |

Contact

We have created a

google group

for the task. Join it to ask any question and to interact with other participants.

Follow us on twitter

to get the latest updates on the data and the competition!

If you need to contact the organisers only,

send us an email.

Organisation:

- Dimiter Dimitrov, Sofia University, Bulgaria

- Giovanni Da San Martino, University of Padova

- Hamed Firooz, Facebook AI

- Fabrizio Silvestri, Facebook AI

- Preslav Nakov, Qatar Computing Research Institute, HBKU

- Shaden Shaar, Qatar Computing Research Institute, HBKU

- Firoj Alam, Qatar Computing Research Institute, HBKU

- Bishr Bin Ali, King's College, London

This initiative is part of the Propaganda Analysis Project

Copyright © 2020. All Rights Reserved. Template by pFind Goodies