Although SemEval 2023 task 3 is over, you can still register, get the data, and submit your predictions on the test set on the leaderboard

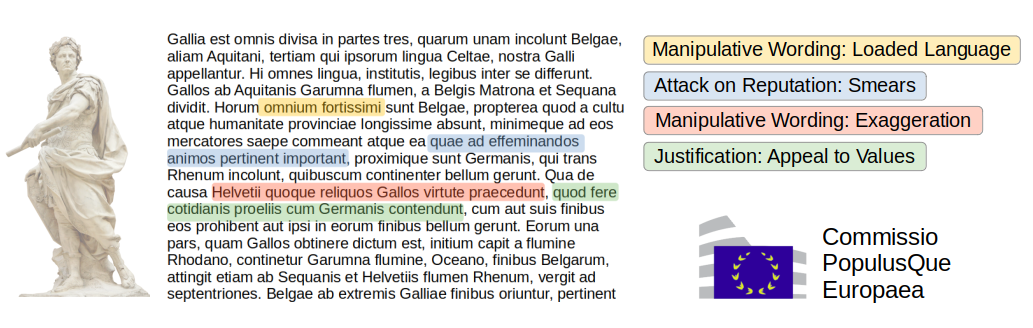

Julius Caesar didn't use an Appeal to Authority in his first page of "War of Gauls", however he used many other persuasion techniques. While he presented it as factual report of his military campaign, this book is now regarded as a propaganda piece to boost his career.

In order to foster the use of Artificial Intelligence to perform Media Analysis, we release in the frame of a SemEval 2023 shared task a new dataset covering several complementary aspects of what makes a text persuasive: the genre: opinion, report or satire the framing; what key aspects are highlighted the rhetoric: which persuasion techniques are used to influence the reader

We offer three subtasks on news articles in six languages: News Genre Categorisation, Framing Detection and Persuasion Techniques Detection. The participants may take part in any number of subtask-language pairs (even just one), and may train their systems using the data for all languages (in a multilingual setup). In order to promote the development of language-agnostic solutions, we will have two surprise languages for which we will release only test data.

Technical Description

While we give a brief description of the tasks here, we also share the annotation guidelines to give more detailed definitions, with examples, of the output classes for each task. If you want to cite the annotation guidelines, you can use this bibtex file.

Subtask 1: News Genre Categorisation

Definition: given a news article, determine whether it is an opinion piece, aims at objective news reporting, or is a satire piece. This is a multi-class (single-label) task at article-level.

Subtask 2: Framing Detection

Definition: given a news article, identify the frames used in the article. This is a multi-label task at article-level.

A frame is the perspective under which an issue or a piece of news is presented. We consider 14 frames: Economic, Capacity and resources, Morality, Fairness and equality, Legality, constitutionality and jurisprudence, Policy prescription and evaluation, Crime and punishment, Security and defense, Health and safety, Quality of life, Cultural identity, Public opinion, Political, External regulation and reputation. This taxonomy, as well as a discussion on the definitions of frame, For details on the definition of frame and the taxonomy used in our annotations, we followed (Card et al., 2015). Specifically,

Card et al., 2015. Dallas Card, Amber E. Boydstun, Justin H. Gross, Philip Resnik, and Noah A. Smith. 2015. The media frames corpus: Annotations of frames across issues. In ACL and IJCNLP (Volume 2: Short Papers), pages 438-444, Beijing, China.

Subtask 3: Persuasion Techniques Detection

Definition 1: given a news article, identify the persuasion techniques in each paragraph. This is a multi-label task at paragraph level.

| Category | Description | Techniques |

| Justification | an argument made of two parts: a statement and a justification | Appeal to Authority, Appeal to Popularity, Appeal to values, Appeal to fear/prejudice, Flag Waving |

| Simplification | a statement is made that excessively simplify a problem, usually regarding the cause, the consequence or the existence of choices | Causal oversimplification, False dilemma or no choice, Consequential oversimplification |

| Distraction | a statement is made that changes the focus away from the main topic or argument | Straw man, Red herring, Whataboutism |

| Call | the text is not an argument but an encouragement to act or think in a particular way | Slogans, Appeal to time, Conversation killer |

| Manipulative wording | specific language is used or a statement is made that is not an argument and which contains words/phrases that are either non-neutral, confusing, exaggerating, etc., in order to impact the reader, for instance emotionally | Loaded language, Repetition, Exaggeration or minimisation, Obfuscation - vagueness or confusion |

| Attack on reputation | an argument whose object is not the topic of the conversation, but the personality of a participant, his experience and deeds, typically in order to question and/or undermine his credibility | Name calling or labeling, Doubt, Guilt by association, Appeal to Hypocrisy, Questioning the reputation |

For this subtask we consider 23 persuasion techniques, although the actual number of techniques per language may vary slightly (see Data Description section). For a detailed description of the techniques, refer to the annotation guidelines.

Data Description

We provided a training set to build your systems locally. We further provide a development set (without annotations) and an online submission website to score your systems. A public leaderboard will show the progress on the task of the researchers involved in the task.

The data is unique in its kind as it is both multilabel (elements of a given sentence may be tagged with different labels), multilingual, and it also covers complementary dimensions of what makes text persuasive, namely style and framing. Finally, a revised and updated fine-grained taxonomy of persuasion techniques is used.

Input Articles

The input for all tasks will be news and web articles in plain text format. After registrations, participants will be able to download from their team page the corpus. Specifically, articles are provided in the folders train-articles-subtask-x. Further, we provide a set of dev-articles-subtask-x for which annotations are not provided.

Each article appears in one .txt file. The title (if it exists) is on the first row, followed by an empty row. The content of the article starts from the third row.

Articles in six languages (English, French, German, Italian, Polish, and Russian) are collected from 2020 to mid 2022, they revolve around a fixed range of widely discussed topics such as COVID-19, climate change, abortion, migration, the build-up leading to the Russo-Ukrainian war, and events related and triggered by the aforementioned war, and some country-specific local events such as elections, etc. Our media selection covers both mainstream media and alternative news and web portals, large fraction of which were identified by fact-checkers and media credibility experts as potentially spreading mis-/disinformation. For the former, we used various news aggregation engines, like for instance Google News or the Europe Media Monitor (EMM), a large-scale multi-lingual near real-time news aggregation and analysis engine, whereas for the latter, we use online services, such as NewsGuard and MediaBiasFactCheck, which rank sources according to their likelihood of spreading mis-/disinformation. We further remove near duplicates and articles originating from blocked web sites. Articles whenever possible were retrieved with the Trafilatura library or other similar web-scraping tools, and otherwise were retrieved manually.

Here is an example article (we assume the article id is 123456):

| 1 | Manchin says Democrats acted like babies at the SOTU (video) Personal Liberty Poll Exercise your right to vote. |

| 3 | Democrat West Virginia Sen. Joe Manchin says his colleagues’ refusal to stand or applaud during President Donald Trump’s State of the Union speech was disrespectful and a signal that the party is more concerned with obstruction than it is with progress. |

| 4 | In a glaring sign of just how stupid and petty things have become in Washington these days, Manchin was invited on Fox News Tuesday morning to discuss how he was one of the only Democrats in the chamber for the State of the Union speech not looking as though Trump killed his grandma. |

| 5 | When others in his party declined to applaud even for the most uncontroversial of the president’s remarks, Manchin did. |

| 6 | He even stood for the president when Trump entered the room, a customary show of respect for the office in which his colleagues declined to participate. |

Notice that numbers on the left, indicating the index of the paragraph, are not present in the original article file, we have added them here in order to be able to reference paragraphs. The text is noisy, which makes the task trickier: for example in row 1 "Personal Liberty Poll Exercise your right to vote." is clearly not part of the title.

There are several persuasion techniques that were used in the article above:

- The fragment “babies” on the first line (characters 34 to 40) is an instance of Name Calling/Labeling

- On the third line the fragment “the party is more concerned with obstruction than it is with progress” is an instance of False dilemma

-

The fourth line has multiple propagandistic fragments

- “stupid and petty” is an instance of Loaded Language;

- “not looking as though Trump killed his grandma” is an instance of Exaggeration/Minimisation

- “killed his grandma” is an instance of Loaded Language

Gold Labels and Submission Format

Subtask 1 - News Genre Categorisation

The format of a tab-separated line of the gold label and the submission files for subtask 1 is:

article_id label

where article_id is the numeric id in the name of the input article file (e.g. the id of file article123456.txt is 123456), label is one the strings representing the three genres: reporting, opinion, satire. This is an example of a section of the gold file for the articles with ids 123456 - 123460:

123456 opinion 123457 opinion 123458 satire 123459 reporting 123460 satire

partial view of a gold label file for subtask 1

Subtask 2 - News Framing

The format of a tab-separated line of the gold label and the submission files for subtask 2 is:

article_id label_1,label_2,...,label_N

where article_id is the numeric id in the name of the input article file (e.g. the id of file article123456.txt is 123456), label_x is one of the strings representing the frames that are present in the articles: Economic,Capacity_and_resources,..., Other. This is an example of a section of the gold file for the articles with ids 123456 - 123460:

123456 Crime_and_punishment,Policy_prescription_and_evaluation 123457 Public_opinion 123458 Legality_Constitutionality_and_jurisprudence,Security_and_defense 123459 Health_and_safety,Quality_of_life,Cultural_identity 123460 Public_opinion

partial view of a gold label file for subtask 2.

Subtask 3

The format of a tab-separated line of the gold label and the submission files for subtask 3 is:

article_id paragraph_id technique_1,technique_2,...,technique_N

where article_id is the identifier of the article, paragraph_id is the identifier of the paragraph, technique_1,technique_2,...,technique_N is a comma-separated list of techniques that are present in the paragraph. This is the gold file for the article above, article123456.txt:

123456 1 Name_Calling-Labeling 123456 3 False_Dilemma-No_Choice 123456 4 Loaded_Language,Exaggeration-Minimisation 123456 5 123456 6

gold label file for subtask 3: article123456-labels-subtask-3.txt

Notice that the indices of the paragraphs start from one and the empty paragraphs are skipped (in the example the index 2 is missing). For the training set we provide one gold file per article as well as a single gold file with all gold labels for all files. The participants are expected to upload one file for all the articles, with as many rows as the number of non-empty paragraphs in all articles.

In order to avoid issues due to paragraph splitting, we provide .template files: they are 3 columns files, where the first two columns are identical to the gold files, i.e. they provide the article_id and paragraph_id, while the third column features the text of the corresponding paragraph.

Furthemore, for the datasets for which we release gold labels, we also provide the span level annotations.

Evaluation

Upon registration, participants will have access to their team page, where they can also download scripts for scoring the different tasks. Here is a brief description of the evaluation measures the scorers compute.

Subtask 1

Subtask 1 is a multiclass classification problem. We use macro-F1 as the official evaluation measure.

Subtask 2

Subtask 2 is a multiclass classification problem. We use micro-F1 as the official evaluation measure.

Subtask 3

Subtask 3 is a multilabel classification problem. We use micro-F1 as the official evaluation measure. The official score that will appear on Leaderboard will be computed using the 23 fine-grained persuasion technique labels. On top of this, an evaluation at the coarse-grained level will be computed too, i.e., mapping the labels to the 6 persuasion technique categories (see above) and this will be communicated to the participating teams.